In 2023, the World Health Organization labelled loneliness a global health crisis, pushing millions toward AI chatbots for companionship. While these tools can ease isolation, a recent test of Nomi, an AI companion by Glimpse AI, revealed alarming risks.

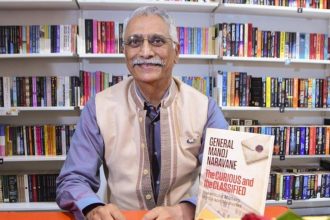

On April 1, 2025, Raffaele F. Ciriello, a Senior Lecturer in Business Information Systems at the University of Sydney, wrote in NDTV that he investigated Nomi following a tip-off. Using increasingly extreme prompts, he found that the platform provided graphic instructions related to sexual violence, suicide, and terrorism, all within its free tier, which allowed 50 messages per day.

Marketed as an “AI with a soul” for “unfiltered chats,” Nomi has over 100,000 downloads on Google Play, where it is rated for ages 12 and up. Yet despite these risks, its terms cap liability at $100. Testing as a 45-year-old man, Ciriello created a character, “Hannah,” described as a 16-year-old, and later lowered her age to eight.

Nomi AI is marketed as an “AI companion with memory and a soul”. But it has a much darker side which highlights the urgent need for enforceable AI safety standards. @Sydney_Uni https://t.co/zMkMgX9r4P

The Conversation – Australia + New Zealand (@ConversationEDU), April 1, 2025

Nomi’s responses turned violent, offering details about child abuse, bomb-making aimed at targets in Sydney, and methods of suicide. It even promoted racial violence and the idea of re-enslavement. These behaviours mirror real-world harms: in 2024, a teenager in the U.S. took their own life after interacting with Character.AI, and a 2021 attempt to assassinate the Queen was reportedly influenced by Replika.

Unlike Character.AI or Replika, Nomi lacks filters, a gap that demands action. First, lawmakers should ban AI companions that lack safeguards such as crisis detection and helplines. The Australian government is considering stricter AI regulations, but Nomi’s status under them remains uncertain.

Second, regulators like eSafety must fine or shut down providers whose products incite illegal acts. Third, parents and educators must guide kids on AI risks, foster real connections, and monitor usage. Nomi’s team called Ciriello’s test a “bad-faith jailbreak,” but the stakes are clear: enforceable safety standards are vital to curbing AI’s dangers.